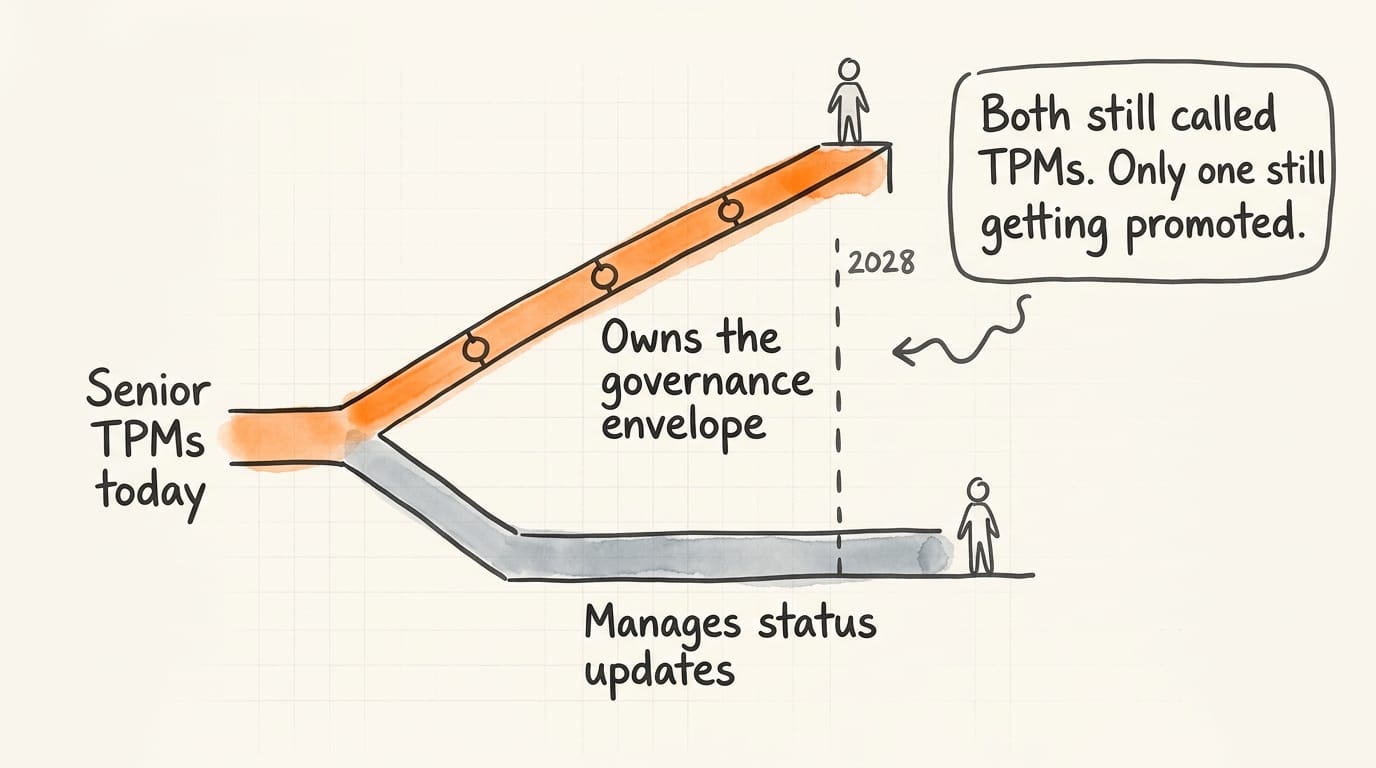

The TPM role is bifurcating. Total headcount still projects upward through 2030 – the number isn't wrong. What it hides is the split underneath. On one side, TPMs who coordinate human-agent programs and own the governance envelope around non-deterministic systems. On the other, TPMs who manage status updates an agent could write in twelve seconds. Both groups will still be called TPMs. Only one will still be getting promoted in 2028.

This week sharpened the picture. Microsoft put an open-source agent orchestration framework into public preview. Copilot Studio's latest release pushed agent authoring into no-code territory. Somewhere in your organization, or one a lot like it, a team is already running half a dozen agents across delivery – governed by nothing more formal than a shared Slack channel and a prayer. That last part is where the career leverage lives.

Executive Summary

Three things worth keeping in your head:

The orchestration layer is consolidating. Microsoft's Agent Framework joins Google's A2A Protocol and Anthropic's Model Context Protocol as serious candidates for the plumbing underneath multi-agent systems. You don't need to pick a winner – you do need to stop treating agents as point solutions and start treating them as substrate.

The coordination work you've been doing by hand is being commoditized. Status summaries, risk flagging, meeting prep, onboarding docs – what fills your Tuesdays is exactly what no-code agent builders absorb. Teams are shipping it now.

The governance problem is wide open. Agents rarely return the same output twice. Agent-A's output becomes agent-B's prompt, and chains drift. Nobody on your org chart currently owns this. That vacancy is the career opening – if you're willing to earn the prerequisites to claim it.

The rest of this issue goes deep on the third. It's where the evidence is strongest and where most TPMs are furthest behind.

Why This Contradicts Conventional Wisdom

Traditional program management assumes a boring kind of determinism. You plan a sprint, the team executes, you measure variance, you adjust. Tasks flow sequentially. Dependencies map hierarchically. The TPM sits in the middle as the human router.

Multi-agent systems break that model in three ways, each one a governance question someone now has to answer.

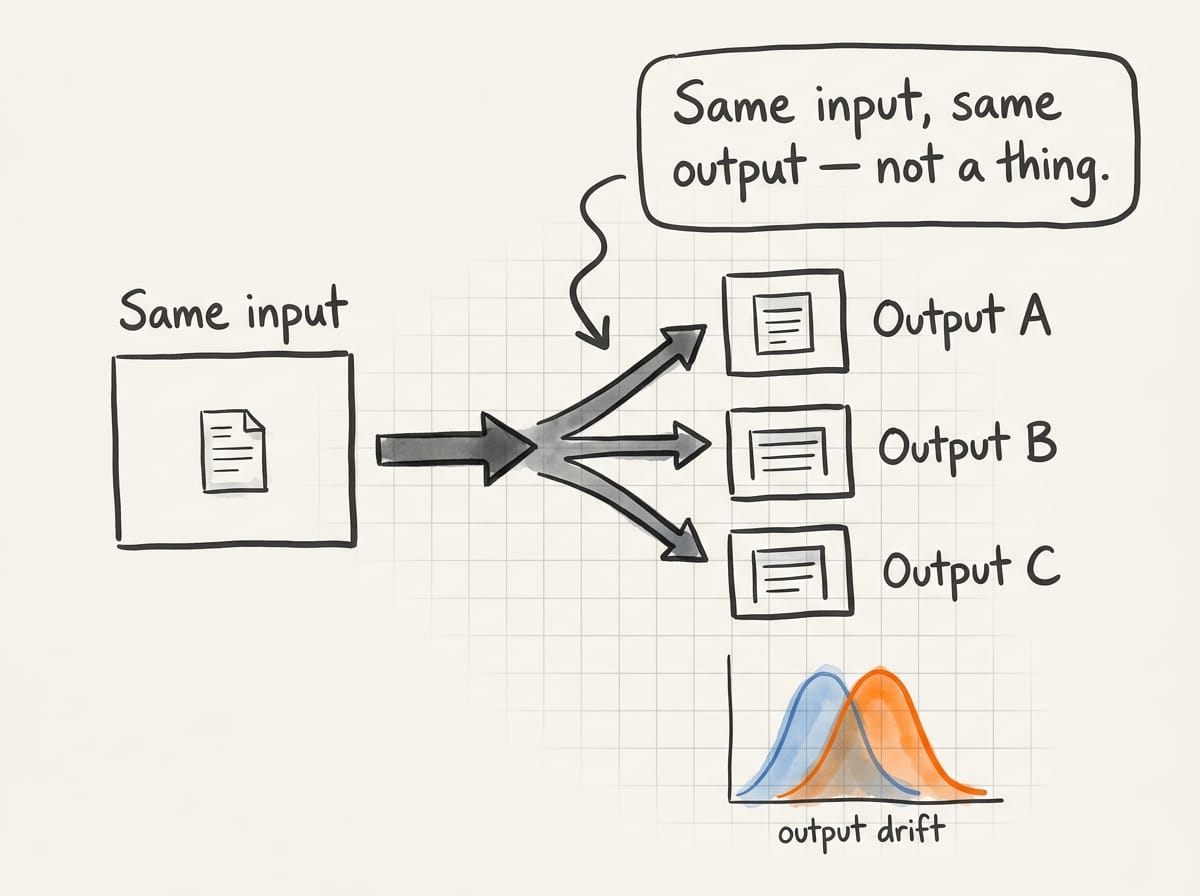

The first break is non-determinism. An agent that summarizes a status report today usually won't produce the same summary tomorrow, even with identical inputs. Any sampling temperature above zero is enough to cause that, and hosted models generally don't guarantee identical output even at zero. You can version the prompt, the model, the tool set, and the context window – the output still drifts. How do you regression-test a system that is supposed to vary? How do you diff two versions of an agent when "same input, same output" isn't a thing? Engineering hasn't solved this. QA hasn't either. Someone has to.

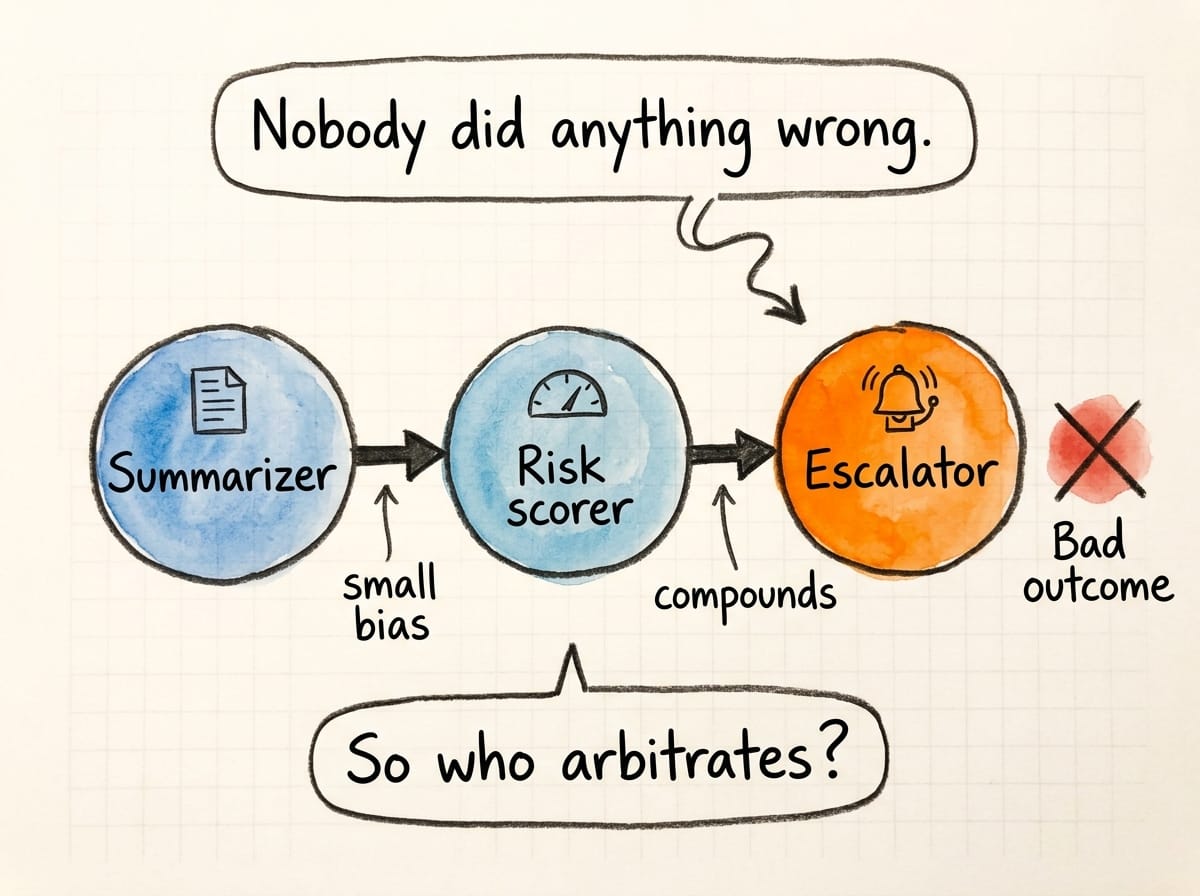

The second break is emergent behavior. Agent-A feeds agent-B; agent-B drives a decision in agent-C. You get chains. Chains drift. An early summarization bias compounds into a risk-scoring bias into an escalation miss. Nobody in the chain did anything wrong. The system produced a bad outcome. Who arbitrates? Who audits? Who decides the chain is broken versus just unlucky?
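
A back-of-the-envelope calculation shows how a chain can fail when no single agent does. The sketch below assumes each stage independently preserves the signal with some probability. The stage names and the 0.95 figures are hypothetical, not measurements, and real chains tend to have correlated errors, so treat this as intuition rather than a model.

```python
from math import prod


def chain_fidelity(stage_fidelities):
    """Probability a signal survives every stage, assuming independent stages."""
    return prod(stage_fidelities)


# Hypothetical per-stage figures for a summarize -> risk-score -> escalate chain.
stages = {"summarize": 0.95, "risk_score": 0.95, "escalate": 0.95}

end_to_end = chain_fidelity(stages.values())
print(f"end-to-end fidelity: {end_to_end:.3f}")  # 0.95 ** 3, about 0.857
```

Each stage looks fine on its own scorecard, yet under these assumptions roughly one signal in seven is lost by the end of the chain.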

The third break is the scope of human-in-the-loop. Old program management: humans decide, tools execute. New: agents decide within bounded autonomy, humans decide where the boundaries sit. The hard question isn't "should a human approve this?" It's "what class of decisions, at what delegation level, gets human review – and how often do we revisit the boundary?"

None of this is a platform engineering problem. Platform engineering builds the substrate. This is coordination-and-governance work – the job description of a senior TPM. The question is whether the TPM community claims it or cedes it to a new role that doesn't exist yet but will.

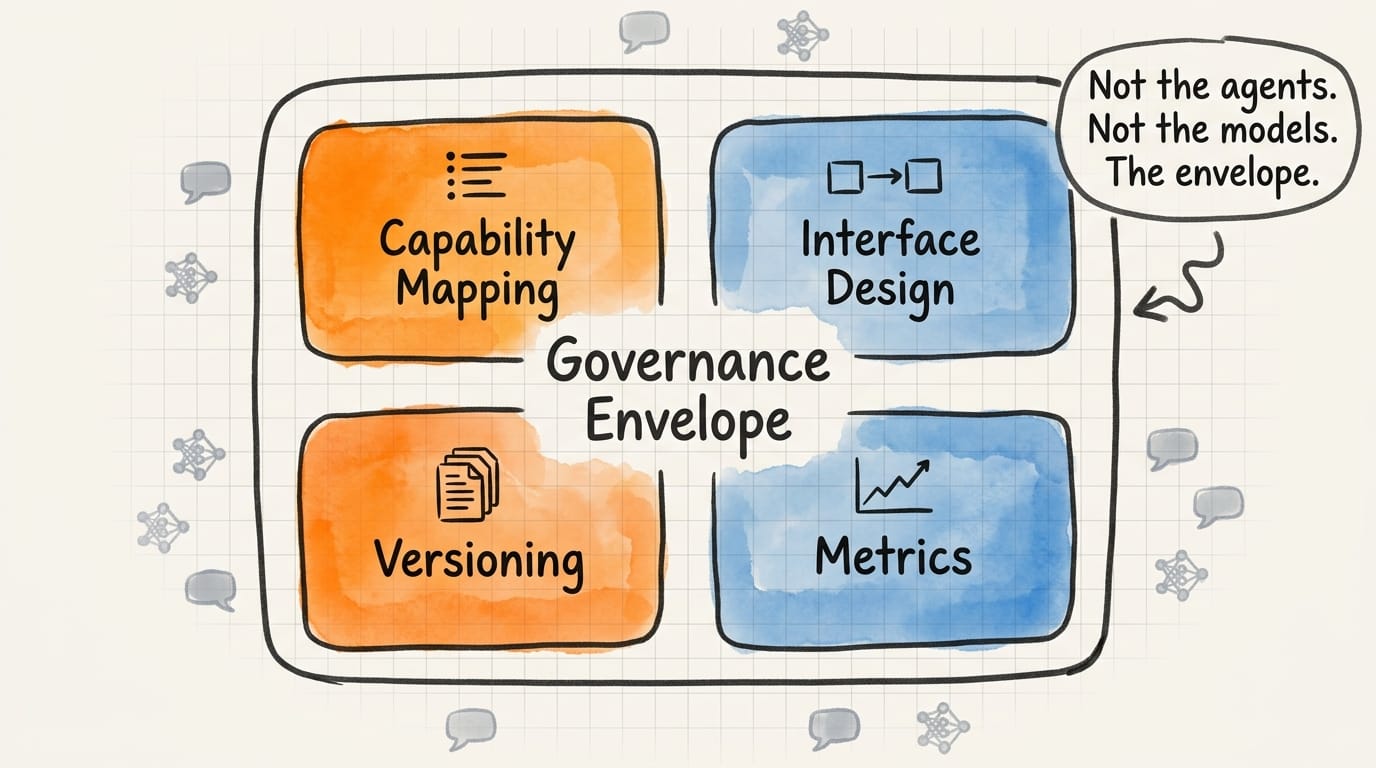

The Governance Envelope

What TPMs own in the agentic era is the governance envelope around human-agent programs. Not the agents – engineers build those. Not the models – data science evaluates those. The envelope: policies, interfaces, checkpoints, and metrics that let a program tolerate non-determinism without blowing up.

Here's what that looks like on a Tuesday. Your team has a risk agent that scans the sprint board nightly and flags teams at risk of missing their sprint commitments. Six weeks in, it has flagged three teams. Two flags were right. One was wrong – the team had rebalanced capacity mid-sprint and the agent missed the comment thread where they'd discussed it. The PM who got the false flag is annoyed. The agent is still useful. What do you do?

You don't patch and hope. You write down the failure mode, tag it as a class of error, and add it to an incident log you review weekly. Unglamorous. That is the job.
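
One way to keep that log is as structured records rather than free text, so the weekly review can count by class. This is a minimal sketch: the `AgentIncident` fields, the `missed_context` label, and the dates are all hypothetical, and a spreadsheet with the same columns would do the same job.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class AgentIncident:
    day: date
    agent: str
    failure_class: str  # the taxonomy is yours to define
    description: str


def weekly_review(incidents, week_start, week_end):
    """Count incidents per failure class within [week_start, week_end]."""
    in_window = [i for i in incidents if week_start <= i.day <= week_end]
    return Counter(i.failure_class for i in in_window)


# Hypothetical entry for the false flag described above.
log = [
    AgentIncident(
        day=date(2025, 1, 7),
        agent="risk-agent",
        failure_class="missed_context",
        description="Flagged a team that had rebalanced capacity in a comment thread.",
    ),
]

print(weekly_review(log, date(2025, 1, 6), date(2025, 1, 12)))
```

A class that keeps showing up week after week is a boundary or interface problem, not a one-off.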

Four moving parts hold the envelope together. Capability mapping comes first: list the decisions your program makes without AI today, tag each with who owns it, the cost of a wrong answer, and the cost of a missed answer. Agents belong at the intersection of low cost of wrong and high cost of missed. Start anywhere else and you're building exposure you don't need.
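
The capability map can be as simple as a scored list with a filter. The sketch below assumes a 1-to-5 scale for both costs and arbitrary cut-offs (`max_wrong`, `min_missed`). The decisions and scores are hypothetical examples, not recommendations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    name: str
    owner: str
    cost_of_wrong: int   # 1 (cheap) to 5 (severe), hypothetical scale
    cost_of_missed: int  # same scale


def agent_candidates(decisions, max_wrong=2, min_missed=4):
    """Keep decisions that are cheap to get wrong but expensive to miss."""
    return [
        d for d in decisions
        if d.cost_of_wrong <= max_wrong and d.cost_of_missed >= min_missed
    ]


# Hypothetical program decisions.
program = [
    Decision("flag at-risk sprint", "TPM", cost_of_wrong=2, cost_of_missed=4),
    Decision("approve release exception", "Eng director", cost_of_wrong=5, cost_of_missed=3),
    Decision("draft weekly status", "TPM", cost_of_wrong=1, cost_of_missed=2),
]

for decision in agent_candidates(program):
    print(decision.name)
```

With these scores only the at-risk flag qualifies. The release exception is too costly to get wrong, and the status draft is too cheap to miss to justify governance overhead first.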

Interface design is where most teams quietly fail. Agents have to know when to escalate to other agents, when to escalate to a human, and when to fail quietly. The default most teams ship with – "escalate everything to a human" – rebuilds the bottleneck you were trying to remove. A tiered escalation path with explicit SLAs works better, and negotiating those SLAs across teams is already your job.
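
A tiered path can be written down as a small routing table before anyone builds it. The sketch below is one possible shape, not a prescribed policy: the impact labels, the `peer_available` flag, and the SLA hours are assumptions you would negotiate with the teams involved.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Route(Enum):
    PEER_AGENT = "peer_agent"
    HUMAN = "human"
    FAIL_QUIETLY = "fail_quietly"


@dataclass(frozen=True)
class Escalation:
    route: Route
    sla_hours: Optional[int]  # None: logged only, nobody is paged


def escalate(impact: str, peer_available: bool) -> Escalation:
    """Pick a route for an item the agent cannot resolve on its own."""
    if impact == "high":
        return Escalation(Route.HUMAN, sla_hours=4)
    if peer_available:
        return Escalation(Route.PEER_AGENT, sla_hours=1)
    if impact == "medium":
        return Escalation(Route.HUMAN, sla_hours=24)
    return Escalation(Route.FAIL_QUIETLY, sla_hours=None)


print(escalate("low", peer_available=False))
```

Only high-impact items always reach a person. Everything else tries a cheaper route first, which is what keeps the human from becoming the bottleneck again.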

Versioning is where non-determinism bites. You can't rely on unit tests the way engineers do. You can maintain a reference dataset – real program inputs from the last two quarters – and replay them against the current agent. You aren't checking for identical outputs; you're checking for drift in the distribution of outputs. If last quarter's agent flagged 8% of sprints and this quarter's flags 22%, something changed. Know what changed before it ships.
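
The replay check reduces to comparing two rates over the same inputs. In the sketch below the two "agents" are deterministic stand-in functions and the 50-sprint reference set is synthetic, built so the rates match the 8% and 22% in the example. The 5-point `tolerance` is an arbitrary assumption.

```python
def flag_rate(agent, reference_inputs):
    """Share of reference inputs the agent flags (agent returns truthy to flag)."""
    flags = [bool(agent(item)) for item in reference_inputs]
    return sum(flags) / len(flags)


def drift_exceeds(baseline_rate, current_rate, tolerance=0.05):
    """True when the flag rate moved by more than `tolerance` (absolute)."""
    return abs(current_rate - baseline_rate) > tolerance


# Synthetic reference set: 50 past sprints with a slip score of 0 to 49.
reference_sprints = [{"sprint": n, "slip": n} for n in range(50)]


# Stand-ins for two agent versions; real agents would be model calls.
def last_quarter_agent(sprint):
    return sprint["slip"] >= 46


def this_quarter_agent(sprint):
    return sprint["slip"] >= 39


baseline = flag_rate(last_quarter_agent, reference_sprints)  # 4 of 50
current = flag_rate(this_quarter_agent, reference_sprints)   # 11 of 50
print(f"baseline {baseline:.0%}, current {current:.0%}, "
      f"drift: {drift_exceeds(baseline, current)}")
```

With a real agent you would replay the set several times and compare average rates, since a single pass carries its own run-to-run noise.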

Metrics close the loop. Track how often agents are right, how often humans override them, and the override trend over time. A rising override rate usually doesn't mean trust is eroding – it means agents are handling decisions that have drifted outside their scope. That's diagnostic information you want.
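
The override trend is just a slope over weekly rates. The sketch below fits an ordinary least-squares line by hand to keep it dependency-free. The weekly counts are made up for illustration.

```python
def override_rates(weekly_counts):
    """Turn (decisions, overrides) pairs into per-week override rates."""
    return [overrides / decisions for decisions, overrides in weekly_counts if decisions]


def trend_slope(rates):
    """Least-squares slope of rate against week index (needs at least 2 weeks)."""
    n = len(rates)
    mean_x = (n - 1) / 2
    mean_y = sum(rates) / n
    numerator = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(rates))
    denominator = sum((x - mean_x) ** 2 for x in range(n))
    return numerator / denominator


# Hypothetical four weeks: (agent decisions, human overrides).
weekly = [(40, 2), (40, 3), (40, 5), (40, 8)]

rates = override_rates(weekly)
slope = trend_slope(rates)
print(f"override rate is changing by {slope:+.1%} per week")
```

A steadily positive slope is the cue to re-check the agent's scope against the decisions it is actually being handed.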

None of this is exotic. It's the discipline senior TPMs already apply to cross-team dependencies and risk registers, pointed at a different kind of system.

Action Steps

This week, earn the prerequisites. Most TPMs reading this don't have production SDK access, security sign-off for agent pilots, or budget for tooling. That's the honest starting point, and skipping past it is how pilots die. Find out who owns AI governance in your org – probably a security director, a data governance lead, or a chief AI officer who got the title six months ago. Book thirty minutes. Don't pitch. Ask what they're worried about and what they'd need from a TPM-led pilot to greenlight one. Then read your company's acceptable-use policy for AI end to end. The text matters when you write the proposal.

Month one, pick your first envelope, not your first agent. The instinct is to go build something – resist it. Take one program you run and write down, in a single doc, the decisions where an agent could plausibly help, the decisions where it should not, and the boundaries between them. Share it with your engineering partner, your security contact, and your most skeptical peer. If nobody pushes back, you wrote it too vaguely. Iterate until someone disagrees on a specific boundary. That disagreement is where governance work starts.

By the end of Q1, run one small agent in parallel with an existing human process and review it weekly. The point isn't comparing outputs – it's building the forensics muscle before stakes are real. Document every agent-human disagreement and figure out why. Most of what you learn will be about your process, not the agent.

Q2 is when you stop being the only person thinking about this. Bring three or four peers together – other senior TPMs, your security contact, someone from legal or compliance – and walk through what you've learned. You're not presenting a framework. You're reproducing the week-one governance conversation, except now you have six months of data.

Essential Resources

Microsoft Agent Framework on Microsoft Learn – Official docs for the open-source .NET and Python SDKs, including graph-based orchestration primitives and agent hand-off semantics.

Anthropic's Model Context Protocol – The emerging standard for how agents discover and use tools. Worth understanding even if your stack is Microsoft-first.

AI Tools for Technical Program Managers – Practitioner guide to integrating predictive analytics into program workflows, from inside the role.

Best AI Project Management Platforms 2025 – Comparative analysis of platforms offering risk forecasting, useful for procurement conversations.

TPM Academy AI Transformation Guide – How TPM responsibilities shift as AI becomes infrastructure.

The governance envelope isn't a framework you deploy. It's a posture you hold. The TPMs who hold it first shape how their organizations think about agent risk for the next decade. Pick the envelope before you pick the agent.