The Orchestration Imperative: Why the Governance Gap Is Your Strategic Moment

When GPS rendered harbor pilots' route memorization obsolete, the reasonable prediction was extinction. What happened instead: vessel traffic increased, ships got larger, and harbor pilots became more essential – not less. Their role shifted from route knowledge to real-time risk integration, traffic management, and environmental assessment. The mechanical layer disappeared. The judgment layer expanded.

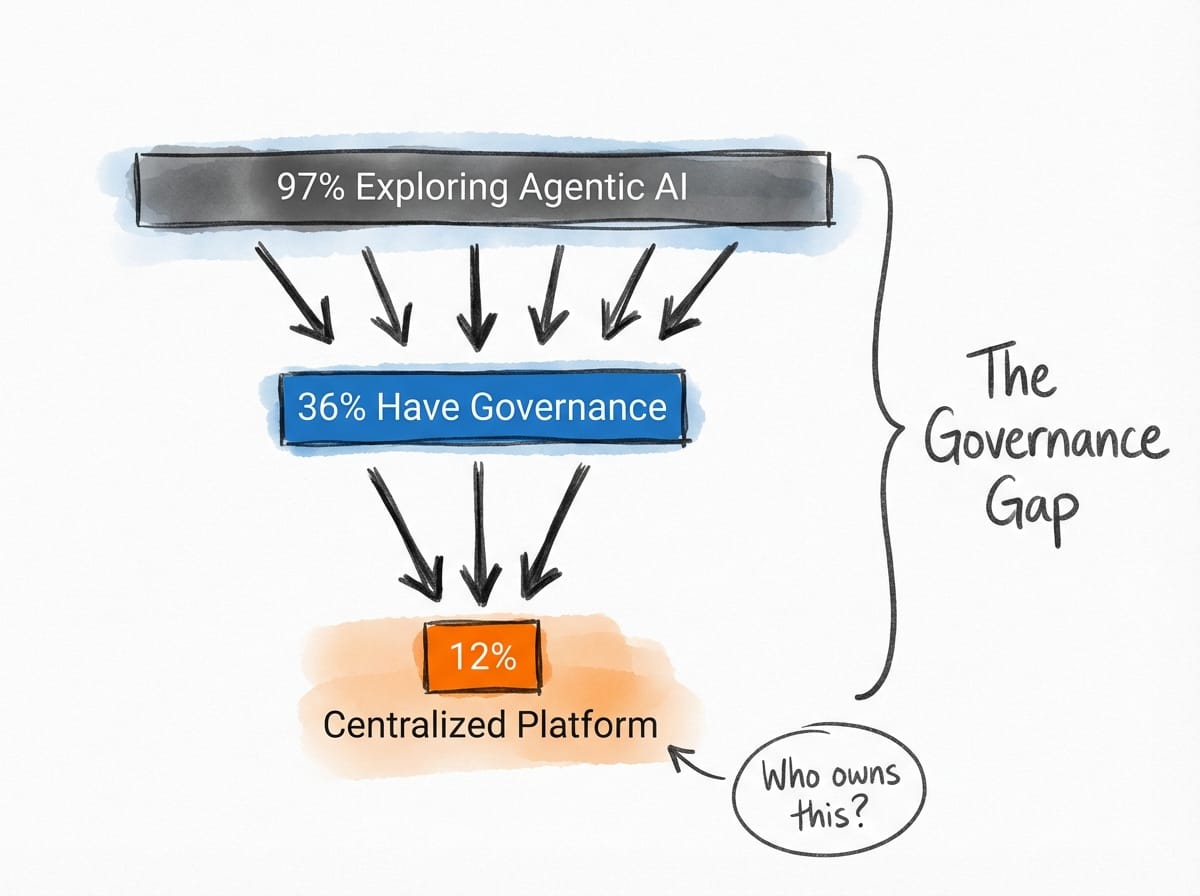

The same structural shift is hitting the TPM role right now. AI is automating the coordination layer – dependency tracking, status aggregation, risk identification – and the complexity of what remains is increasing. The governance gap tells the story: 97% of organizations are exploring agentic AI strategies, but only 36% have centralized governance, and just 12% use a centralized platform.

Five Signals This Week

1. The governance gap is quantified – and widening. That 97%/36%/12% split is a portfolio-level risk that nobody in most organizations currently owns. It compounds: 66% of leaders find building human-in-the-loop checkpoints technically difficult. This isn't a tooling gap. It's a workflow design gap – and workflow design is what TPMs do.

2. Agentic platforms are shipping faster than governance can follow. Anthropic launched Claude Managed Agents in public beta with secure agent sandboxing. Google is testing an "Agent" feature for Gemini Enterprise. The Agentic AI Foundation crossed 97 million MCP installs in March. Every one of these announcements widens the gap from Signal 1 – more capable agents, same absent governance.

3. The proof-of-value clock is running. 71% of CIOs must prove AI value by mid-2026 or face budget cuts. Meanwhile, 74% of AI's economic value is concentrated in just 20% of organizations (PwC). The experimentation phase is expiring. Organizations still running pilots risk being defunded; organizations with governance in place are positioned to scale.

4. Orchestration as a Tier 1 skill. Agentic workflow design and AI governance are listed as differentiating skills – high demand, limited supply. The explicit career trajectory from the data: AI consumer to AI orchestrator. That's not a rebrand. It's a scope expansion into the governance vacuum that Signals 1 through 3 describe.

5. The adoption gap hits close to home. 45% of companies struggle to integrate AI with existing PM tools, and 41% of project managers flag AI adoption as a challenge. That includes us. The tools TPMs use to manage programs are themselves lagging on AI integration – which might be the most direct evidence that this is a program management problem, not a vendor problem.

Strategic Deep Dive: The AI Orchestration Mandate

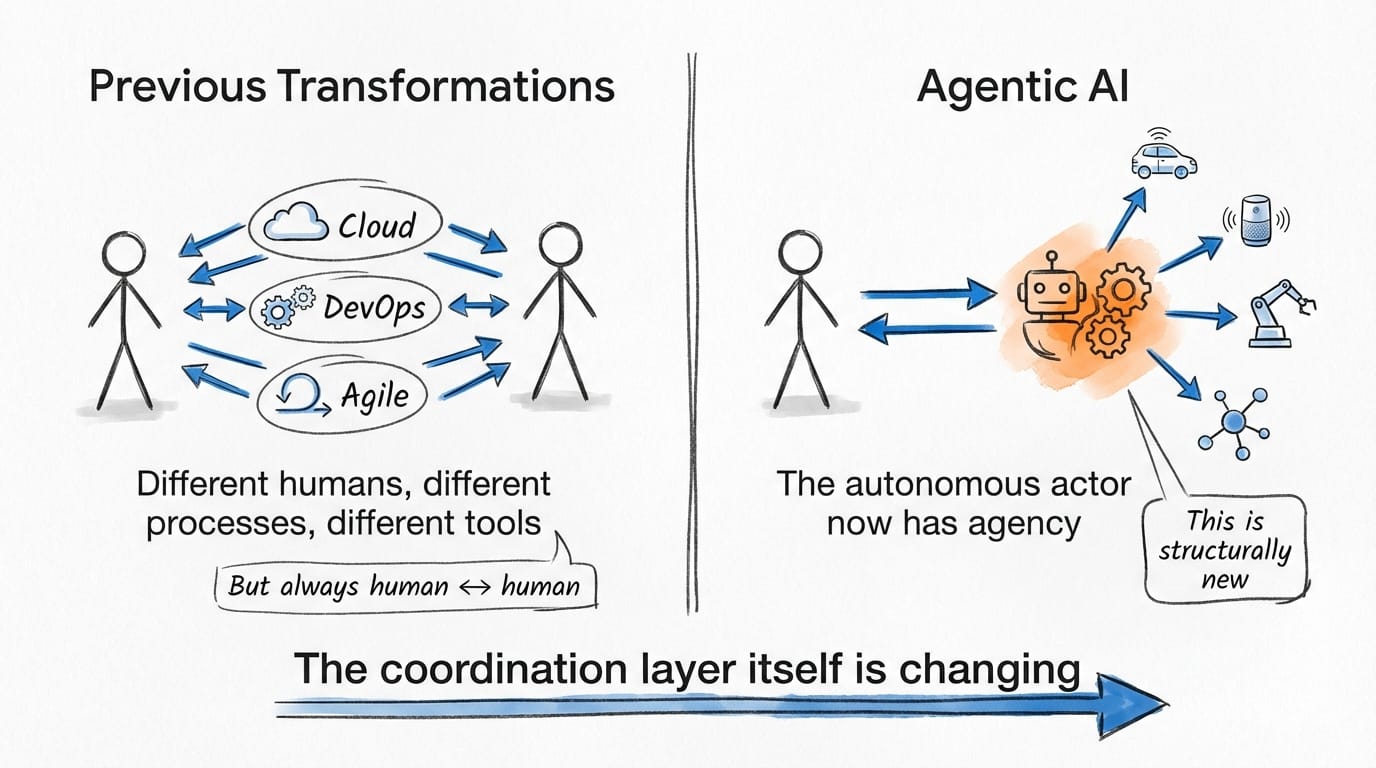

The coordination layer is changing in a way it hasn't before. Cloud migration, DevOps, agile – those transformations reshuffled who coordinated and how. DevOps didn't just change tooling; it moved deployment ownership from ops teams to engineering teams and collapsed entire handoff boundaries. But the autonomous actor in every prior transformation was always human. A different human in a different process with different tools, but human. Agentic AI introduces something structurally new: autonomous non-human systems that make decisions, take actions, and create dependencies without a person in the loop. The thing being coordinated now has agency.

Three reports this week converge on what happens when organizations miss this distinction. Enterprises are hitting a wall because they're automating existing processes rather than redesigning how work gets done. Deloitte's 2026 Tech Trends research captures the pattern – and the implication, as I read it, is that the gap isn't in capability but in design. Only 46% of AI proofs of concept reach production. 79% of organizations face AI adoption challenges – a double-digit increase from 2025. And then the stat that should stop you: 54% of C-suite executives admit AI adoption is "tearing their company apart." This is a design problem. Scoping work, mapping dependencies, building governance structures, aligning stakeholders around execution – that maps to what TPMs already do.

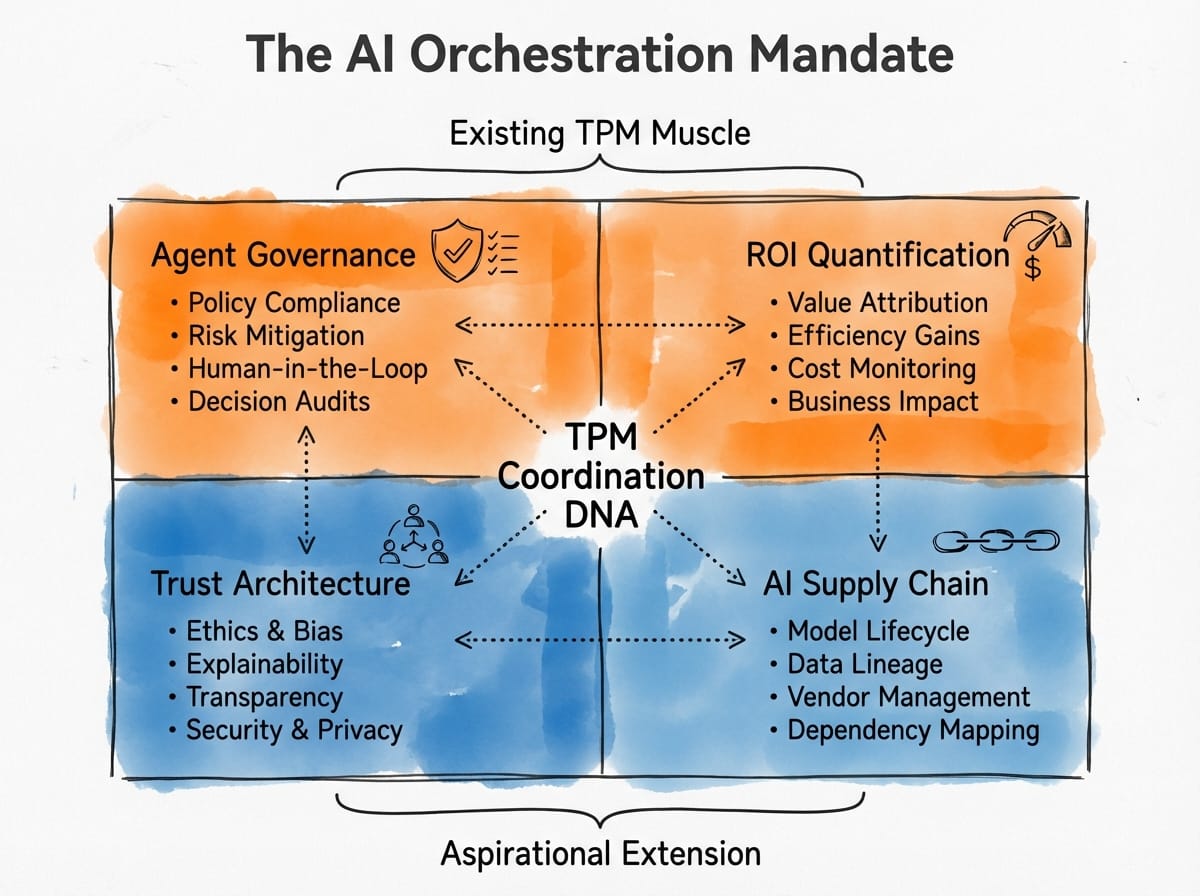

Four domains emerge from this convergence.

Agent Governance. Right now, in most organizations, nobody owns the risk register for autonomous agents. Escalation protocols, HITL checkpoints, dependency mapping between agent outputs and human decisions – these exist in some form for human-run programs. They're almost entirely absent for agent-run ones. The 66% of leaders struggling with HITL checkpoints aren't facing a technology problem; they're facing a workflow design problem that compounds at portfolio scale. Every ungoverned agent is an untracked dependency.

ROI Quantification. The pressure is specific and imminent: 71% of CIOs face a mid-2026 deadline to prove AI value or lose budget. With 74% of economic value concentrated in 20% of organizations (PwC), the remaining 80% split just 26% of it. On average, that puts an organization in the top fifth at roughly 11 times the value of one outside it (74/20 against 26/80) – and many of those outside can't articulate why. Building the measurement frameworks that protect budgets and justify scaling isn't a stretch assignment. It's the outcome measurement TPMs already own, pointed at a new target.

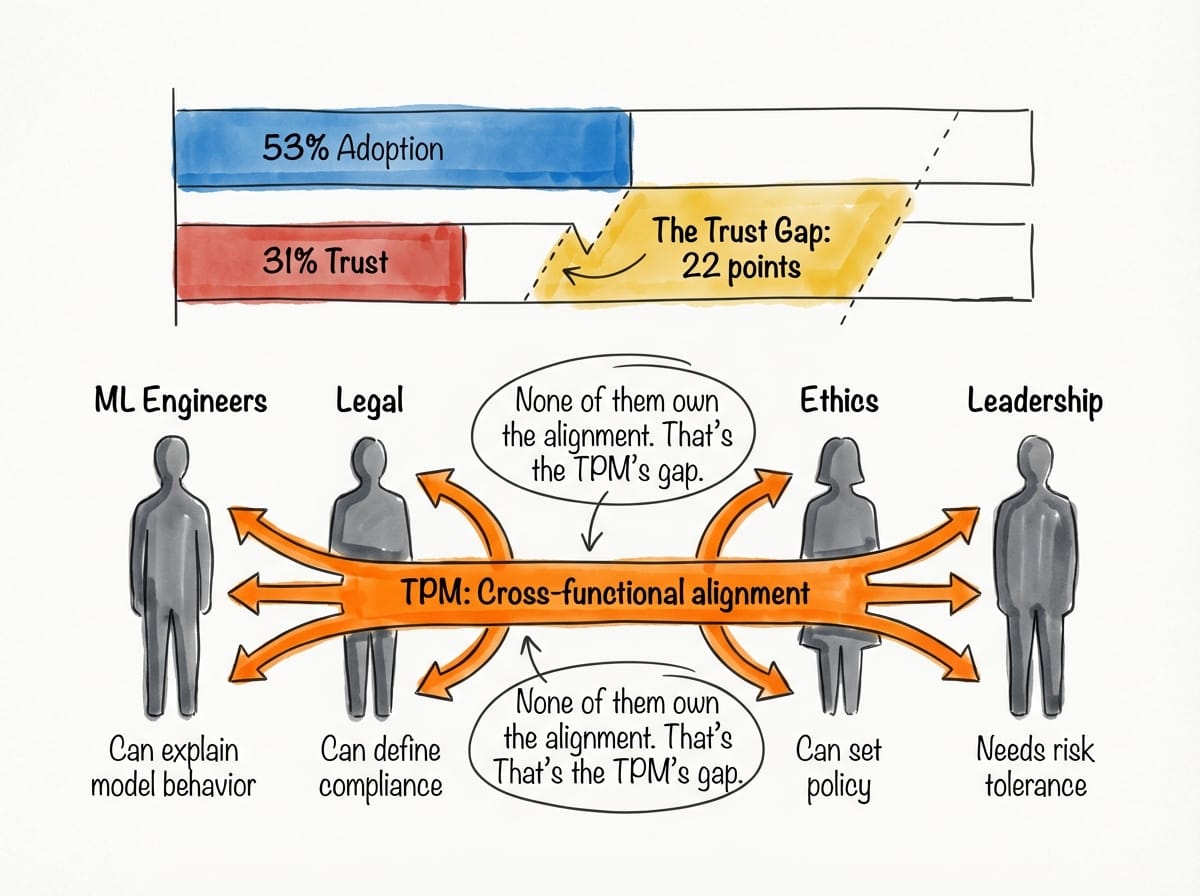

Trust Architecture. Stanford's AI Index shows 53% adoption but only 31% trust – adoption is running 22 points ahead of trust, so many of the people using AI tools at work don't fully trust what they're getting. Models are measurably better than they were eighteen months ago, so this isn't a capability problem. It's a visibility problem: decisions happening inside a black box with no stakeholder line of sight into why. ML engineers can explain model behavior. Legal can define compliance boundaries. Ethics teams can set policy. But none of them own the cross-functional alignment that makes trust operationally real – translating between ML's technical concerns, legal's compliance requirements, and leadership's risk tolerance. That's the TPM's gap to fill: designing transparency and accountability into agentic workflows so the people who need to govern these systems can actually see what they're governing.

AI Supply Chain Management. The vendor landscape is fragmenting fast. Claude Sonnet 4.6, GPT-5.4, and Gemini 3.1 Pro are all leading different benchmark categories. Anthropic, OpenAI, and Google announced a joint intelligence-sharing initiative focused on combating model copying. Which model fits which workload? That's a procurement and risk decision, not a research decision – and it needs to happen faster than traditional procurement cycles allow. Your VP of Engineering probably doesn't have an AI vendor dependency map – and would want one.
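For a sense of what a vendor dependency map could look like in practice, here is a minimal Python sketch. Everything in it is an assumption for illustration: the `VendorDependency` fields, the low/medium/high switching-cost scale, and the workload, vendor, and owner names are hypothetical, not drawn from any report cited here.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class VendorDependency:
    workload: str                      # internal workload (hypothetical name)
    vendor: str                        # model or platform vendor
    model: str                         # model or platform in use
    relationship_owner: Optional[str]  # None means nobody owns the relationship
    switching_cost: str                # "low", "medium", or "high" (assumed scale)


def vendor_concentration(deps: List[VendorDependency]) -> Dict[str, float]:
    """Return the share of workloads that depend on each vendor."""
    total = len(deps)
    if total == 0:
        return {}
    counts = Counter(d.vendor for d in deps)
    return {vendor: n / total for vendor, n in counts.items()}


def supply_chain_risks(deps: List[VendorDependency]) -> List[str]:
    """Return workloads with no relationship owner or a high switching cost."""
    return [
        d.workload
        for d in deps
        if d.relationship_owner is None or d.switching_cost == "high"
    ]


# Illustrative data only; replace with your own portfolio.
deps = [
    VendorDependency("support-triage", "vendor-a", "model-a1", "J. Rivera", "low"),
    VendorDependency("code-review", "vendor-a", "model-a2", None, "medium"),
    VendorDependency("contract-summaries", "vendor-b", "model-b1", "Legal Ops", "high"),
    VendorDependency("forecasting", "vendor-a", "model-a1", "Data Platform", "low"),
]

print(vendor_concentration(deps))
print(supply_chain_risks(deps))
```

A spreadsheet with the same columns does the same job. What matters is that concentration and unowned relationships become visible in one place.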

This isn't four new jobs. It's the existing TPM job description applied to a coordination layer that now includes autonomous systems.

Your Monday Morning

1. Audit your portfolio's agent exposure this week. Map which programs are deploying or planning autonomous agents. For each, identify who owns the escalation protocol, who defines HITL checkpoints, and whether governance is centralized or ad hoc. If nobody owns it, propose yourself.

2. When your next portfolio review comes up, add "AI Program ROI Framework" to the agenda. Bring the 71% CIO stat. Propose a standardized measurement template for AI initiatives that maps to business outcomes your leadership already tracks.

3. Build the AI vendor dependency map your VP of Engineering probably lacks, and deliver it by end of month. Catalog which models and platforms your org depends on, who owns each vendor relationship, and what the switching costs look like. Frame it as supply chain risk; that framing will land faster than "AI governance."

4. Read your org's AI governance policy this week. If one exists, great – find the gaps. If it doesn't, that's your finding. Bring it to your next leadership sync as a gap, not a criticism.
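The agent exposure audit in step 1 can start as a very small data structure. The sketch below is one possible shape, not a standard: the `AgentProgram` fields mirror the three questions in that step, and the program names and owners are made up for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class AgentProgram:
    name: str                        # program deploying or planning agents (hypothetical)
    stage: str                       # "deployed" or "planned"
    escalation_owner: Optional[str]  # who owns the escalation protocol, if anyone
    hitl_owner: Optional[str]        # who defines HITL checkpoints, if anyone
    governance: str                  # "centralized" or "ad hoc"


def governance_gaps(program: AgentProgram) -> List[str]:
    """List the governance questions from step 1 that have no good answer."""
    gaps = []
    if not program.escalation_owner:
        gaps.append("no escalation protocol owner")
    if not program.hitl_owner:
        gaps.append("no HITL checkpoint owner")
    if program.governance != "centralized":
        gaps.append("governance is ad hoc")
    return gaps


def audit(programs: List[AgentProgram]) -> Dict[str, List[str]]:
    """Map each program with at least one gap to its list of gaps."""
    report = {}
    for program in programs:
        gaps = governance_gaps(program)
        if gaps:
            report[program.name] = gaps
    return report


# Illustrative portfolio only.
programs = [
    AgentProgram("support-triage-agent", "deployed", "Ops lead", "TPM", "centralized"),
    AgentProgram("invoice-matching-agent", "planned", None, "Finance PM", "ad hoc"),
    AgentProgram("release-notes-agent", "deployed", None, None, "ad hoc"),
]

for name, gaps in audit(programs).items():
    print(f"{name}: {', '.join(gaps)}")
```

Programs that come back with gaps are the ones where "propose yourself" applies.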

Essential Resources

- Deloitte 2026 Tech Trends: Agentic AI Strategy – The "redesign, not automate" insight that frames the governance gap

- Stanford AI Index 2026 – Adoption vs. trust data underlying the Trust Architecture domain

- PwC 2026 AI Performance Study – Value concentration data (74%/20%) that makes the ROI case

- Anthropic Claude Managed Agents – Documentation for the agentic platform TPMs should understand first

- Seven Ways the TPM Role Is Evolving in 2026 – TPM University – Career context for the orchestration shift

- Where Is Technical Program Management Heading in 2026? – Art of TPM – Practitioner perspective on signal vs. noise in 2026