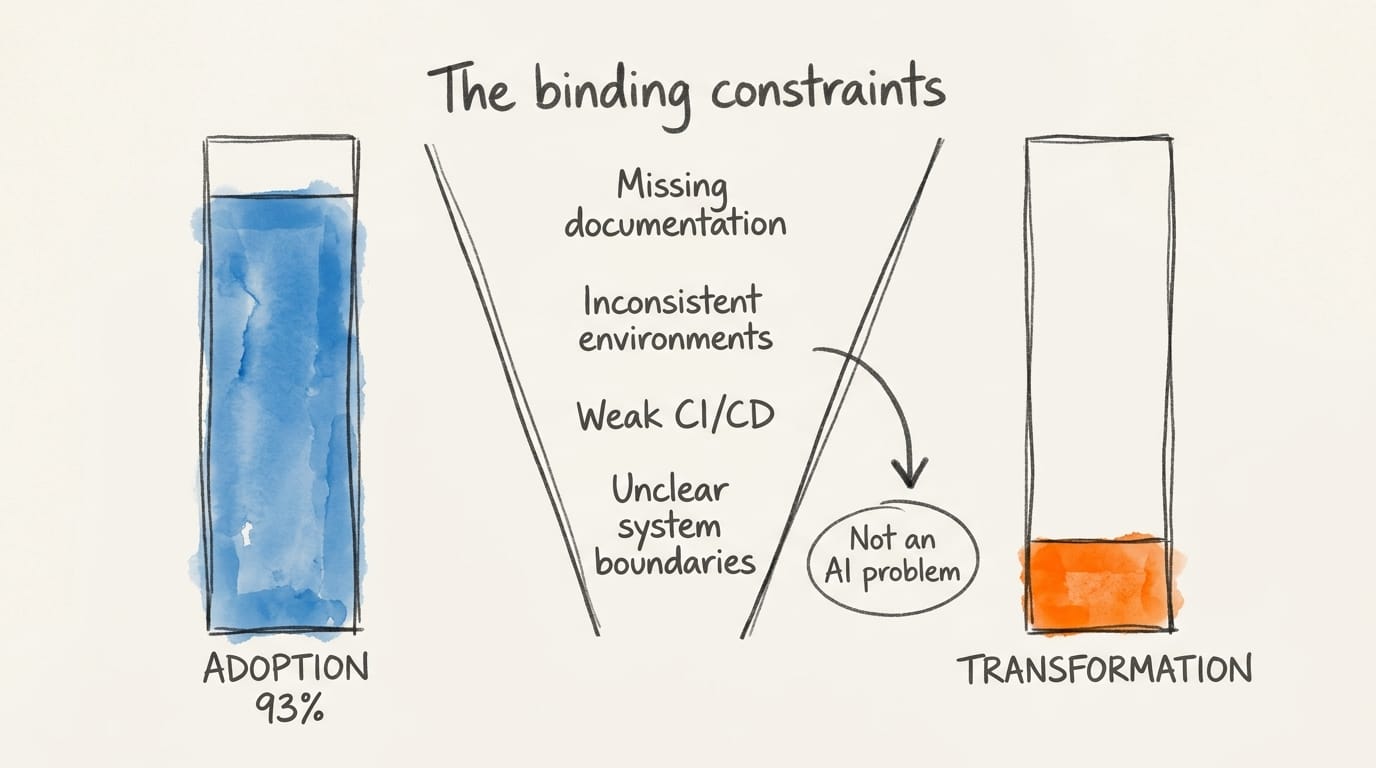

Electric motors were widely adopted in American factories between the 1890s and 1905. Productivity stayed roughly flat for twenty years, because the shafts-and-belts architecture the motors were bolted into had to be torn down and rebuilt – unit drives, single-story material flow, a different shape of work – before the gains showed up. The 93% AI adoption figure in DX's Q1 2026 report is the 1905 electric motor.

Adoption is not the lever this year. The architecture of work the adoption runs through is, and that architecture is where the TPM can make a defensible contribution: a one-page register that binds operational debt to blocked transformation outcomes at the portfolio level, and scope arbitration at the agent-output boundary, where write-speed is outrunning review capacity.

Signals This Week

1. Adoption hit the ceiling. Transformation didn't follow. DX's Q1 2026 AI Impact Report puts AI adoption in engineering organizations at 93%. The binding constraints DX names are not AI problems: missing documentation, inconsistent environments, weak CI/CD, and unclear system boundaries. Their blast radius crosses team boundaries, so no single team owns them, which means this substrate has to be managed at the portfolio level.

2. If half your teams are at agentic cadence, what is your planning cadence? MIT Technology Review surveyed 300 engineering and technology executives: on average they predict a 37% boost in delivery speed from agentic AI, and 98% anticipate dramatic acceleration. Half of surveyed organizations report using agentic AI in some form. Planning cadences set at the portfolio level have not moved in proportion, and that gap is where coordination debt accumulates.

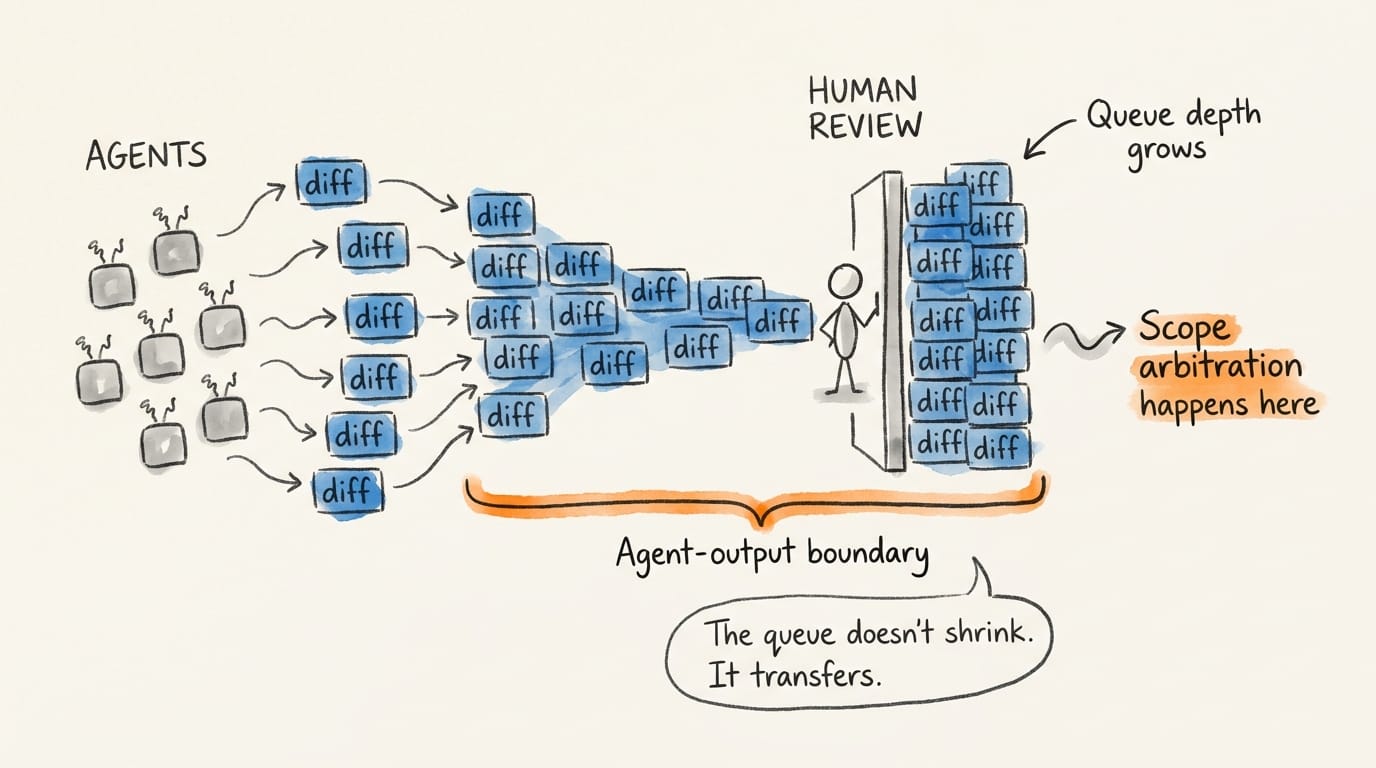

3. More diffs per hour than humans can review – and the queue is growing. Anthropic's 2026 Agentic Coding Trends Report, based on first-party usage data, finds AI raises output volume more than it raises speed. Engineers use AI in roughly 60% of their work but can fully delegate only a small fraction. Multi-agent orchestration – an orchestrator delegating subtasks to specialized agents working in parallel across separate context windows – is displacing single-agent workflows. The queue doesn't shrink. It transfers to the agent-output boundary, where generated work meets human review.

4. 82 agents per human employee. 10% of organizations know what to do about it. Fortune reports AI agents outnumber humans 82-to-1 in hybrid workforces. OutSystems finds 96% of enterprises using agents but only 10% with a clear strategy. Gartner forecasts that more than 40% of current agentic projects will be cancelled by 2027 on cost and complexity. Those cancellations will land on portfolio critical paths, and re-planning around them is coordination and governance work a TPM can credibly produce.
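Signal 3's multi-agent pattern can be sketched in a few lines. This is a minimal illustration, not Anthropic's architecture: `specialized_agent` is a hypothetical stand-in for a model call running in its own context window, and the subtask and agent names are invented. The point is structural: the orchestrator fans out and finishes generation in one concurrent pass, but every result still lands in a single human review queue.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class Diff:
    agent: str
    subtask: str


async def specialized_agent(agent: str, subtask: str) -> Diff:
    # Hypothetical stand-in for a model call running in its own context window.
    await asyncio.sleep(0)  # yield so the agents interleave
    return Diff(agent=agent, subtask=subtask)


async def orchestrate(plan: dict[str, str]) -> list[Diff]:
    # Fan out one specialized agent per subtask and run them concurrently.
    jobs = [specialized_agent(agent, subtask) for subtask, agent in plan.items()]
    return list(await asyncio.gather(*jobs))


plan = {
    "add /orders endpoint": "api-agent",
    "write integration tests": "test-agent",
    "update API docs": "docs-agent",
}
# Generation finishes in one concurrent pass...
review_queue: list[Diff] = asyncio.run(orchestrate(plan))
# ...but every diff still waits on the same human reviewers.
print(f"{len(review_queue)} diffs waiting for review")
```

Adding agents to `plan` makes generation wider without touching the review side at all, which is the transfer the signal describes.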

Four signals, one pattern: adoption has outrun the architecture of work it runs through.

Strategic Deep Dive: The Transformation Debt Register

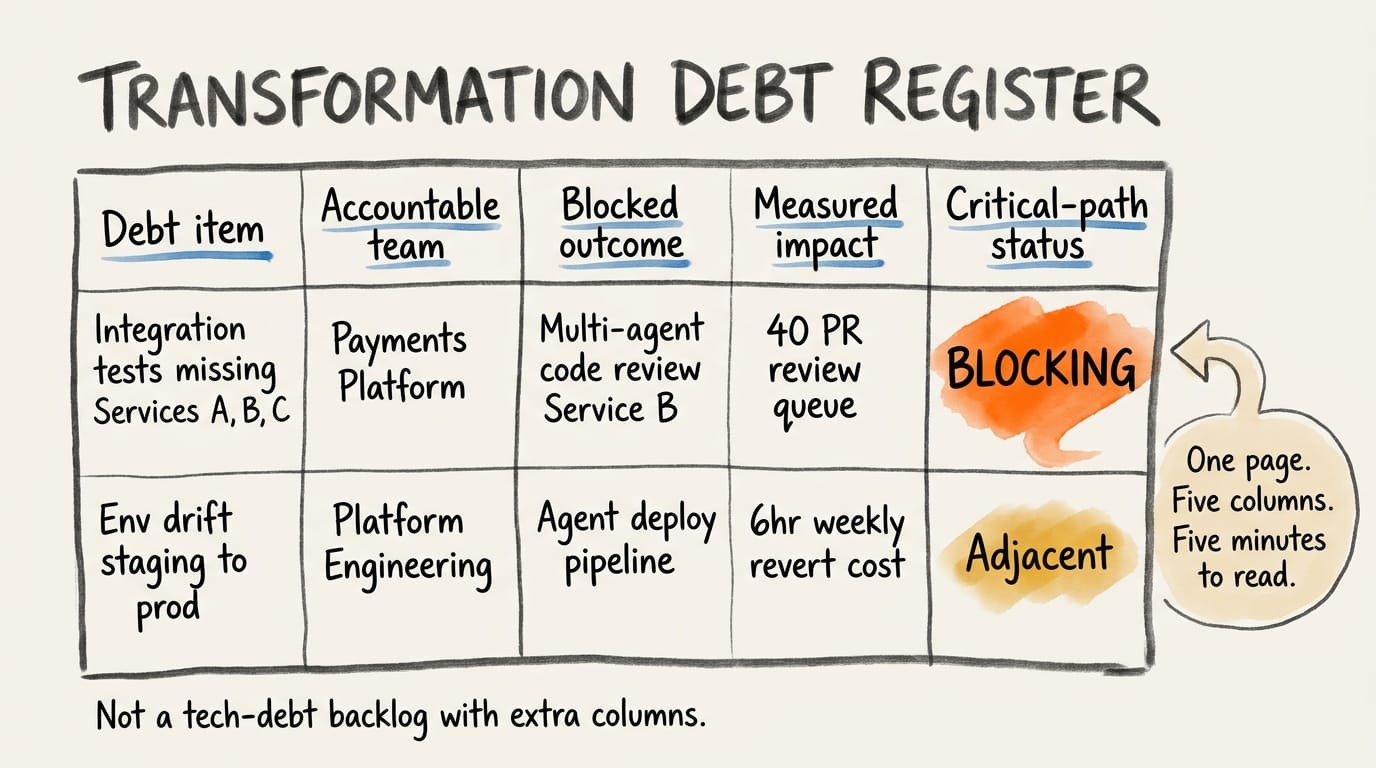

Put the artifact on the table first. One page, five columns:

| Column | Content |
|---|---|
| Debt item | Specific – not "poor testing" but "integration tests absent on Services A, B, C." |
| Accountable team(s) | The team or teams on the hook – the one named in a post-mortem, not the RACI list. A function name fails here; "Platform Engineering" means nobody specifically committed. |
| Blocked transformation outcome | Name the agentic capability this debt is preventing the way you would name a program deliverable. "Multi-agent code review on Service B" reads. "Agentic capability" doesn't. |
| Measured impact | Hours of review overhead, revert rate, escaped defects, deferred roadmap items – whatever your org already tracks. |
| Critical-path status | One of three: blocking a named program this quarter, adjacent-within-quarter, or latent. The column that forces triage. |

One page. Portfolio view. Read by leadership in five minutes.

The Register fails when it exceeds one page, when "blocked transformation outcome" is abstract rather than specific, or when "critical-path status" isn't tied to a named program. That last failure is the most common and the most fatal – it's the column leadership will test first.
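The three failure modes above are mechanical enough to check before the Register goes to leadership. A minimal sketch, assuming you keep the Register as structured rows: `MAX_ROWS` is a hypothetical proxy for "fits on one page", the generic-outcome list is illustrative, and requiring a named program for adjacent items as well as blocking ones is an assumption.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class CriticalPath(Enum):
    BLOCKING = "blocking a named program this quarter"
    ADJACENT = "adjacent-within-quarter"
    LATENT = "latent"


@dataclass
class RegisterRow:
    debt_item: str
    accountable_teams: list[str]
    blocked_outcome: str
    measured_impact: str
    status: CriticalPath
    program: Optional[str] = None  # named program the status refers to


MAX_ROWS = 12  # hypothetical proxy for "fits on one page"
GENERIC_OUTCOMES = {"agentic capability", "ai enablement", "transformation"}  # illustrative


def register_problems(rows: list[RegisterRow]) -> list[str]:
    """Return one message per failure mode found; an empty list means ready to circulate."""
    problems: list[str] = []
    if len(rows) > MAX_ROWS:
        problems.append(f"{len(rows)} rows will not fit on one page (limit {MAX_ROWS})")
    for row in rows:
        if row.blocked_outcome.strip().lower() in GENERIC_OUTCOMES:
            problems.append(f"abstract outcome for {row.debt_item!r}")
        needs_program = row.status in (CriticalPath.BLOCKING, CriticalPath.ADJACENT)
        if needs_program and not row.program:
            problems.append(f"no named program for {row.debt_item!r}")
    return problems


row = RegisterRow(
    debt_item="Integration tests absent on Services A, B, C",
    accountable_teams=["Team A"],
    blocked_outcome="Multi-agent code review on Service B",
    measured_impact="40 PRs waiting for human review",
    status=CriticalPath.BLOCKING,
)
print(register_problems([row]))
```

The sample row fails on the most common mode: it claims to be blocking but names no program, which is exactly what leadership will test first.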

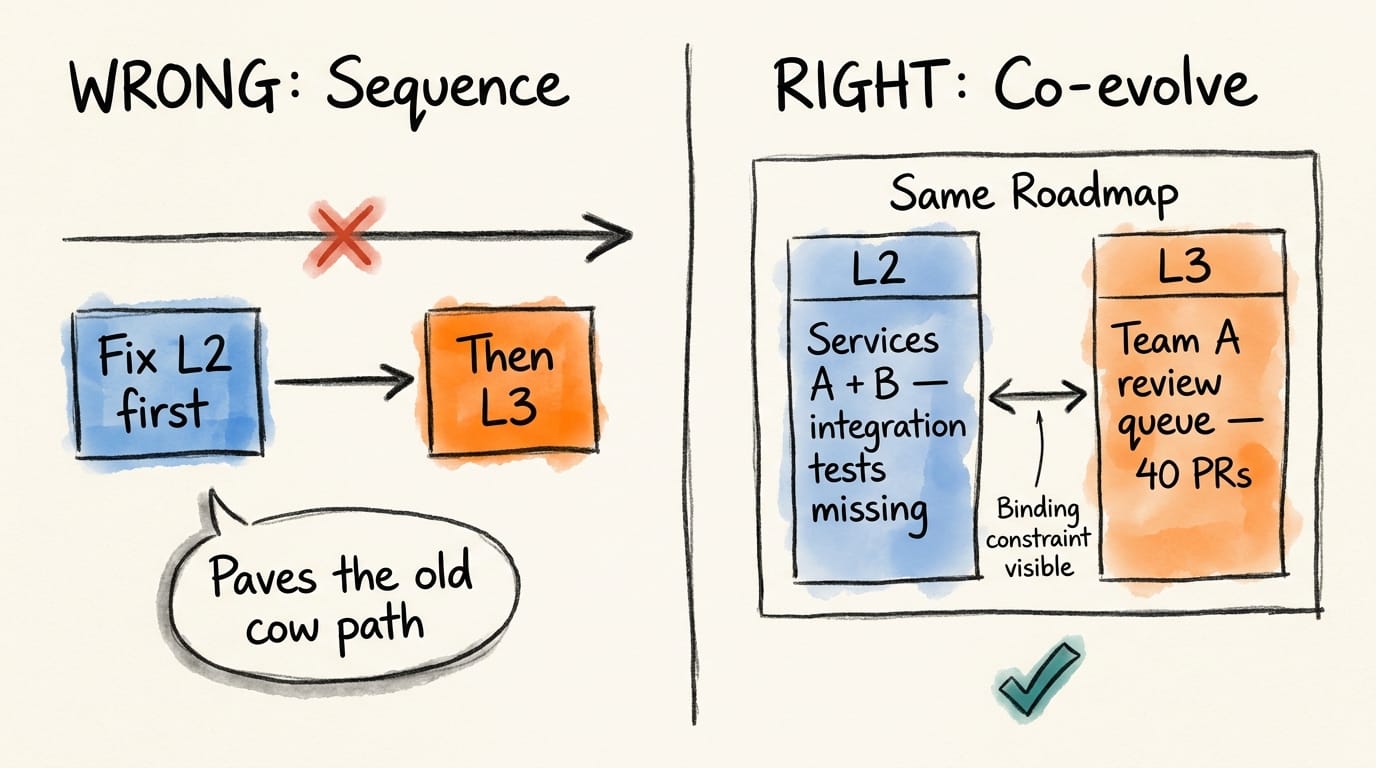

L2 and L3 do not sequence. They co-evolve.

Fixing CI before deploying agents paves the old cow path. Deploying agents on broken CI multiplies the review queue until review collapses under it. Call the operational substrate L2 – CI/CD, environment consistency, system boundaries, documentation – and the agentic delivery architecture L3 – agentic workflows, multi-agent orchestration, review-loop architecture. Neither waits for the other, and the Register's job is to show on one page which L2 debt items are blocking which L3 outcomes.

The novelty is that L2 debt and the L3 review queue show up on the same artifact in the same quarter. Team A ships agent-assisted feature work while Services A and B still lack integration tests. The Register puts Team A's 40-PR review queue on the same line as the missing integration tests on Services A and B: that debt is what keeps a human in every loop. Same roadmap, same artifact.
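The same binding can be sketched as an inversion of the Register: group L2 debt items under the L3 outcome they block and print the measured queue beside them. The rows and the 40-PR figure mirror the Team A example; the data structures and the function name are hypothetical.

```python
from collections import defaultdict

# Hypothetical rows mirroring the Team A example: (L2 debt item, L3 outcome it blocks).
BLOCKS = [
    ("Integration tests absent on Service A", "Team A agent-assisted feature work"),
    ("Integration tests absent on Service B", "Team A agent-assisted feature work"),
]
# Hypothetical L3 measurement per outcome: open agent-authored PRs awaiting review.
QUEUE_DEPTH = {"Team A agent-assisted feature work": 40}


def one_page_view(blocks, queue_depth):
    """One line per L3 outcome: its review queue and the L2 debt blocking it."""
    blockers = defaultdict(list)
    for debt, outcome in blocks:
        blockers[outcome].append(debt)
    lines = []
    for outcome, debts in blockers.items():
        depth = queue_depth.get(outcome, "unmeasured")
        lines.append(f"{outcome} | queue: {depth} | blocked by: {'; '.join(debts)}")
    return lines


for line in one_page_view(BLOCKS, QUEUE_DEPTH):
    print(line)
```

An outcome with no queue measurement prints as `unmeasured`, which is itself a finding worth showing on the page.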

Rival claimants, and the political posture

Transformation Offices produce transformation roadmaps. Engineering Effectiveness teams produce DORA/DX dashboards. Chiefs of Staff to the CTO produce capability heatmaps. None currently produce a one-page artifact that binds operational debt to blocked transformation outcomes at the portfolio level with critical-path status – the combination is the gap. Take it as contributor-not-owner: your name on the artifact, not your headcount on the team. Naming another team's debt in a leadership-visible one-pager is a political act.

Route the Register through a VP or CIO sponsor, and frame it as risk surfacing rather than team critique. Co-authoring with Engineering Effectiveness gives the artifact cover.

Scope arbitration at the agent-output boundary

Volume grows where generation is cheap. Reviewers stay expensive. That mismatch is not a throughput problem you can solve by adding reviewers – you cannot hire humans faster than agents write diffs. It is a scope problem: which diffs merit a human, which ship on automated signals, and which programs get the scarce review capacity this quarter. Review capacity competes across programs, so allocation has to happen at the portfolio level.
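One way to make that portfolio-level allocation explicit is to fill review demand tier by tier using the Register's critical-path status, splitting any shortfall pro rata within a tier. This is a sketch of one possible policy, not a prescribed method; the program names, hour figures, and the strict tier ordering are assumptions.

```python
from dataclasses import dataclass

# Lower number = served first. Mirrors the Register's three critical-path states.
TIER_ORDER = {"blocking": 0, "adjacent": 1, "latent": 2}


@dataclass
class ProgramDemand:
    name: str
    status: str          # "blocking" | "adjacent" | "latent"
    review_hours: float  # human review hours requested this quarter


def allocate(capacity: float, demands: list[ProgramDemand]) -> dict[str, float]:
    """Fill demand tier by tier; within a tier, split what is left pro rata."""
    grants = {d.name: 0.0 for d in demands}
    remaining = capacity
    for tier in sorted({d.status for d in demands}, key=TIER_ORDER.__getitem__):
        tier_demands = [d for d in demands if d.status == tier]
        asked = sum(d.review_hours for d in tier_demands)
        if asked == 0 or remaining <= 0:
            continue
        share = min(1.0, remaining / asked)
        for d in tier_demands:
            grants[d.name] = d.review_hours * share
        remaining -= asked * share
    return grants


grants = allocate(100, [
    ProgramDemand("Payments", "blocking", 60),
    ProgramDemand("Search", "adjacent", 50),
    ProgramDemand("Billing", "latent", 30),
])
print(grants)
```

With 100 hours against 140 requested, the blocking program is fully funded, the adjacent program gets 80% of its ask, and the latent one waits. A strict tier order is the simplest rule to defend in a portfolio review; a weighted split is the obvious alternative if latent programs must never get zero.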

Allocating review capacity across programs is the seat a TPM can credibly take. Portfolio-level scope arbitration (which programs get review capacity this quarter) is not the same as team-level scope arbitration (which PRs merge today). The former needs a view across programs; the latter lives inside one. At the portfolio level, the TPM sets the commit criteria: review-capacity allocation across programs, the gate distinguishing human-reviewed from automation-signaled merges, and the scope-freeze point that prevents agent-generated sprawl from swamping downstream integration. If your org doesn't yet measure this, reading external benchmarks into portfolio-level implications is a first-quarter deliverable on its own.
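The gate and the scope-freeze point can be written down as a routing rule. A minimal sketch under stated assumptions: the high-risk path prefixes, the 200-line threshold, and the `adds_new_scope` flag are hypothetical stand-ins for whatever criteria your portfolio actually commits to.

```python
from dataclasses import dataclass
from datetime import date

HIGH_RISK_PREFIXES = ("payments/", "auth/", "migrations/")  # hypothetical
MAX_AUTO_MERGE_LINES = 200  # hypothetical size limit for automation-signaled merges


@dataclass
class AgentDiff:
    paths: list[str]
    lines_changed: int
    ci_green: bool
    adds_new_scope: bool  # introduces work outside the committed scope


def route(diff: AgentDiff, today: date, scope_freeze: date) -> str:
    # Scope freeze first: after the freeze date, new scope does not enter the queue.
    if diff.adds_new_scope and today >= scope_freeze:
        return "reject: scope frozen"
    # Failing automation never consumes human review time.
    if not diff.ci_green:
        return "return to agent: CI failing"
    touches_high_risk = any(p.startswith(HIGH_RISK_PREFIXES) for p in diff.paths)
    if touches_high_risk or diff.lines_changed > MAX_AUTO_MERGE_LINES:
        return "human review"
    return "merge on automated signals"


freeze = date(2026, 6, 1)
print(route(AgentDiff(["docs/readme.md"], 12, True, False), date(2026, 5, 1), freeze))
```

Ordering the checks this way spends scarce reviewer time only on diffs that are in scope, passing CI, and risky enough to need a human.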

Pick deliberately

For most readers, the Register is a first-quarter artifact – evidence for your next review cycle that you saw the binding constraint and produced the one-pager leadership could act on. For the smaller share whose organizations will institutionalize this work, you're building continuous operational-fitness discipline at portfolio cadence, at director or VP level. The first path costs nothing. The second requires naming another team's debt in a leadership-visible artifact – politically expensive even when correctly done. Pick deliberately.

Your Monday Morning

1. Produce a first-draft Transformation Debt Register this week. One page. Pick your top three programs. Fill the five columns for the five debt items most likely to show up in a post-mortem six months from now. Legible beats exhaustive.

2. Find the agent-output boundary in one program. Measure it. You probably don't have a number yet. That's the starting point. How many PRs or diffs are agents generating per week? What is the queue depth? What is the revert rate on agent-authored changes? If your org doesn't tag agent-authored commits, estimate from a one-week sample: use CODEOWNERS to scope the sample to the program's paths and commit-author heuristics to flag agent-authored changes. The number matters more than the precision. Without a baseline, the scope-arbitration argument is yours in theory and nobody's in practice.

3. Name your rival claimants and talk to one this week. Transformation Office, Engineering Effectiveness lead, Chief of Staff to CTO – whoever exists in your company. Frame it plainly: "I'm producing this artifact. Here's what I need from you to make it accurate. Here's what it gives you in return."

4. Bring the Register to your next portfolio review and ask your VP or CIO to pick the top debt item for next quarter's roadmap. That ask is decision-forcing. Putting something on the agenda isn't. One line of framing does the work in the room: "We are not sequencing operational fitness before delivery redesign. We are running them on the same roadmap and tracking the binding constraints." That's what inoculates your leadership chain against "fix the foundation first" – the argument that will stall this work if you don't pre-empt it.
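Step 2's baseline can be roughed out from `git log` alone. This sketch covers agent-authored commit volume and revert rate, not PR queue depth (which needs your code host's API). The author-name markers are hypothetical heuristics, and matching reverts by subject relies on git's default `Revert "<subject>"` message, so treat the result as an estimate.

```python
import subprocess

# Hypothetical author-name markers; replace with whatever your agents commit as.
AGENT_AUTHOR_MARKERS = ("copilot", "claude", "-agent")
REVERT_PREFIX = 'Revert "'


def read_week_of_log(repo: str = ".") -> list[str]:
    """One line per commit from the last week: author name, a tab, then the subject."""
    result = subprocess.run(
        ["git", "-C", repo, "log", "--since=1 week ago", "--format=%an%x09%s"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout.splitlines()


def is_agent_authored(author: str) -> bool:
    name = author.lower()
    return any(marker in name for marker in AGENT_AUTHOR_MARKERS)


def baseline(log_lines: list[str]) -> dict[str, float]:
    agent_subjects: list[str] = []
    reverted_subjects: set[str] = set()
    for line in log_lines:
        author, _, subject = line.partition("\t")
        if subject.startswith(REVERT_PREFIX) and subject.endswith('"'):
            # git's default revert subject wraps the original subject in quotes.
            reverted_subjects.add(subject[len(REVERT_PREFIX):-1])
            continue  # a revert is not counted as new agent output
        if is_agent_authored(author):
            agent_subjects.append(subject)
    agent_reverts = sum(1 for s in agent_subjects if s in reverted_subjects)
    count = len(agent_subjects)
    return {
        "agent_commits": count,
        "agent_reverts": agent_reverts,
        "revert_rate": agent_reverts / count if count else 0.0,
    }
```

Run `baseline(read_week_of_log())` from inside the program's repository. To scope the sample, append a `--` pathspec listing the directories CODEOWNERS assigns to the program to the `git log` arguments.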

Essential Resources

- DX Q1 2026 AI Impact Report – source of the 93% figure and the binding-constraint diagnosis. The empirical floor of the Register.

- Anthropic 2026 Agentic Coding Trends Report – the output-volume finding; anchor for the scope-arbitration argument.

- MIT Technology Review: Redefining the Future of Software Engineering – the 37% predicted delivery-speed boost and the evidence that L3 is already under way at half of surveyed orgs.

- Fortune: AI Agents Are Acting Like Employees, But Companies Treat Them Like Software – the 82:1 ratio and the strategy gap.

- SnapLogic Announces AI Gateway and Trusted Agent Identity – infrastructure response to the governance side of the debt.